Revitalizing Rsyslog with Docker: A New Era of Log Management

We’re excited to announce a renewed focus on the rsyslog Docker project, bringing you robust, flexible, and easy-to-use containerized solutions for your logging needs. This isn’t just a refresh; it’s a reimagining of how rsyslog can integrate into modern, containerized environments.

rsyslog on AWS – Update an existing CloudFormation stack

Welcome to this guide on updating an existing CloudFormation stack for the rsyslog server on AWS. In this tutorial, we will walk you through the steps necessary to ensure your rsyslog server is running the latest version with all the benefits of updated features and performance improvements. We will provide detailed instructions and screenshots to make the update process straightforward, ensuring minimal disruption to your logging setup. Whether you’re a seasoned AWS user or new to CloudFormation, this guide will help you achieve a smooth and efficient update.

Prerequisites

If you have made changes to the rsyslog configuration, back them up before the update and restore them afterwards, because the update deploys a new EC2 instance. The article AWS rsyslog Sync Configuration with S3 describes how to do this.

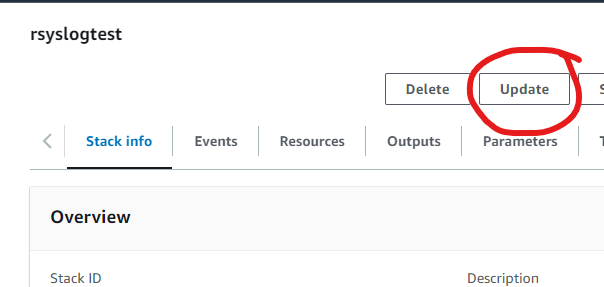

Step 1: Select the CloudFormation Stack

To begin the update, open the AWS Management Console, go to the CloudFormation section and locate the stack you want to update.

- Visit AWS CloudFormation: Log in to your AWS Management Console and go to the CloudFormation service.

- Select Your Stack: Identify and select the CloudFormation stack for your rsyslog server. In this example, the stack is named “rsyslogtest”.

- Initiate Update: Click on the Update button, as highlighted in the screenshot above.

This will start the process to update your existing CloudFormation stack.

Click Update to proceed.

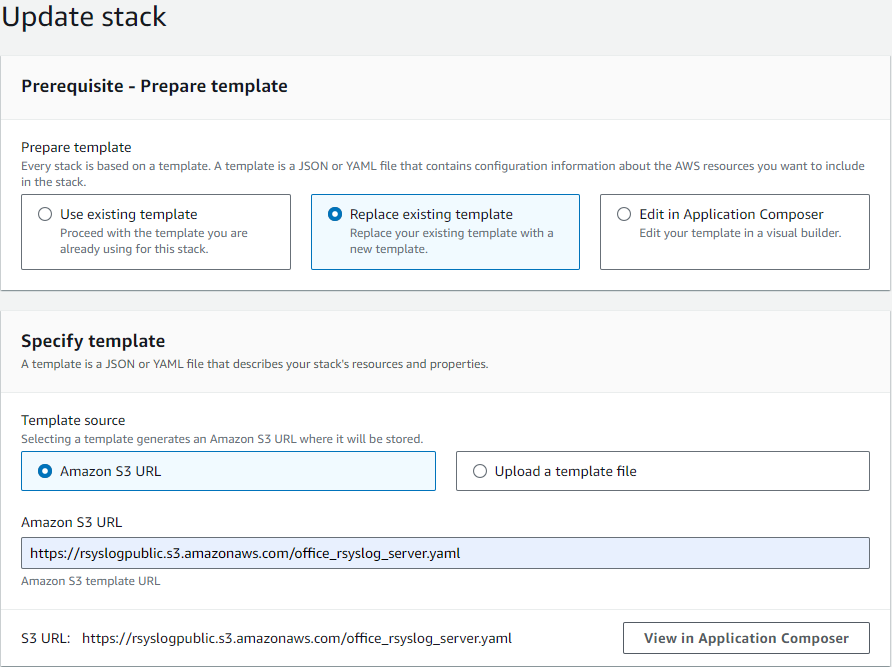

Step 2: Prepare the Template

After selecting the stack to update, the next step involves preparing the template for the update. Follow these instructions:

- Choose Template Option: In the “Prepare template” section, select the Replace existing template option.

- Specify Template Source: Under “Template source”, choose Amazon S3 URL.

- Enter S3 URL: Enter the following URL in the provided field:

https://rsyslogpublic.s3.amazonaws.com/office_rsyslog_server.yaml

Alternatively, you can use the template URL provided on the AWS Marketplace product page for the rsyslog server.

This will prepare the new template to be applied to your existing stack.

Click Next to proceed.

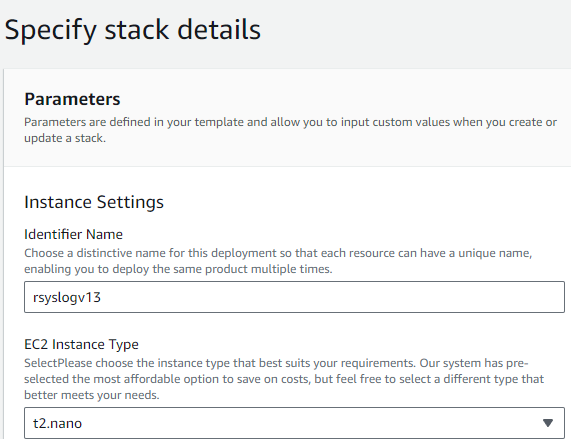
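The same template replacement can also be scripted, which helps when several stacks need updating. The following is a minimal Python sketch, assuming boto3 is installed and AWS credentials are configured. The parameter keys used in the example (IdentifierName, InstanceType) are hypothetical placeholders for the keys your stack actually shows, and the function names are illustrative. If the template creates IAM resources, the UpdateStack call additionally needs a Capabilities argument.

```python
TEMPLATE_URL = "https://rsyslogpublic.s3.amazonaws.com/office_rsyslog_server.yaml"


def build_update_request(stack_name, keep_params, changed_params=None):
    """Build keyword arguments for the CloudFormation UpdateStack call.

    keep_params:    parameter keys whose current values should be reused.
    changed_params: mapping of parameter key -> new value.
    """
    changed_params = changed_params or {}
    parameters = [
        {"ParameterKey": key, "UsePreviousValue": True}
        for key in keep_params
        if key not in changed_params
    ]
    parameters += [
        {"ParameterKey": key, "ParameterValue": value}
        for key, value in changed_params.items()
    ]
    return {
        "StackName": stack_name,
        "TemplateURL": TEMPLATE_URL,  # "Replace existing template" + "Amazon S3 URL"
        "Parameters": parameters,
    }


def update_stack(request):
    """Submit the request (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so build_update_request works without boto3

    client = boto3.client("cloudformation")
    return client.update_stack(**request)
```

For example, `update_stack(build_update_request("rsyslogtest", ["IdentifierName"], {"InstanceType": "t3.small"}))` would keep the identifier and switch the instance type. List every existing parameter key in one of the two arguments, so that nothing is silently left to the template defaults.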

Step 3: Specify Stack Details

After preparing the template, proceed to specify the stack details:

- Review Parameters: Ensure all parameters are correct. Adjust as necessary.

- Instance Settings:

  - Identifier Name: Change if necessary.

  - EC2 Instance Type: Change if necessary, as a new instance will be deployed.

Review all options carefully in case new features have been added.

Once all configurations are reviewed and adjusted, click Next to proceed.

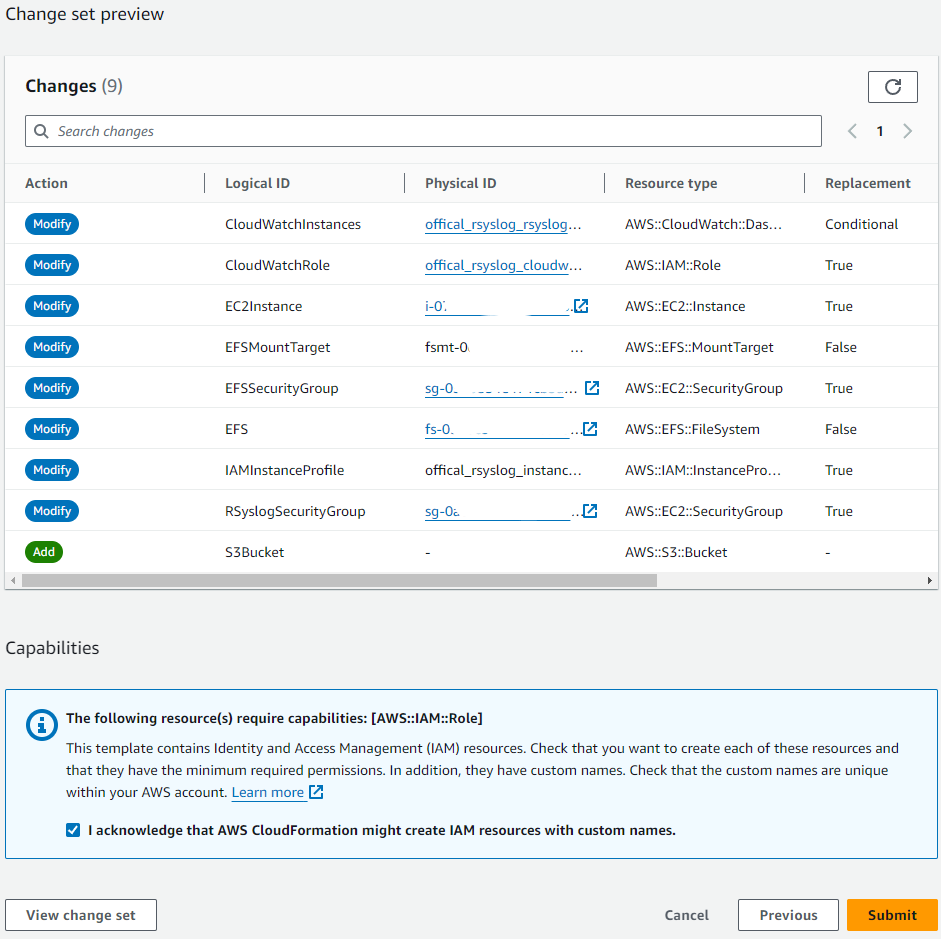

Step 4: Configure Stack Options and Review

Review the stack options and make any necessary adjustments.

- Review Changes: Carefully review the list of changes in the “Change set preview”. Ensure all modifications align with your expectations.

- Submit: Once everything is reviewed and confirmed, click the Submit button to start the update process.

After clicking Submit, AWS will begin updating your CloudFormation stack. Monitor the progress to ensure the update completes successfully. If any issues arise, refer to the stack events for troubleshooting.
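The "Change set preview" corresponds to a CloudFormation change set. A sketch of how the same preview could be produced and summarized from a script follows, assuming boto3 and configured credentials; the change set name and the resource names in the test data are hypothetical. Flagging resources whose Replacement value is "True" or "Conditional" makes it easy to spot the EC2 instance, which the update replaces with a new one.

```python
TEMPLATE_URL = "https://rsyslogpublic.s3.amazonaws.com/office_rsyslog_server.yaml"


def summarize_change_set(response):
    """Turn a DescribeChangeSet response into one readable line per resource."""
    lines = []
    for change in response.get("Changes", []):
        if change.get("Type") != "Resource":
            continue
        rc = change["ResourceChange"]
        line = f'{rc["Action"]:<8} {rc["LogicalResourceId"]} ({rc["ResourceType"]})'
        # Replacement is the string "True", "False" or "Conditional"
        if rc.get("Replacement") in ("True", "Conditional"):
            line += f'  replacement={rc["Replacement"]}'
        lines.append(line)
    return lines


def preview_update(stack_name, parameters, name="rsyslog-update-preview"):
    """Create a change set for the new template and return its summary."""
    import boto3  # lazy import: summarize_change_set works without boto3

    client = boto3.client("cloudformation")
    client.create_change_set(
        StackName=stack_name,
        ChangeSetName=name,
        ChangeSetType="UPDATE",
        TemplateURL=TEMPLATE_URL,
        Parameters=parameters,
    )
    # Block until CloudFormation has calculated the changes
    client.get_waiter("change_set_create_complete").wait(
        StackName=stack_name, ChangeSetName=name
    )
    response = client.describe_change_set(StackName=stack_name, ChangeSetName=name)
    return summarize_change_set(response)
```

The `parameters` argument takes the same list of ParameterKey entries as UpdateStack. Once reviewed, a change set can be applied with the client's `execute_change_set` call, which corresponds to clicking Submit in the console.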

Step 5: Monitor the Update Process

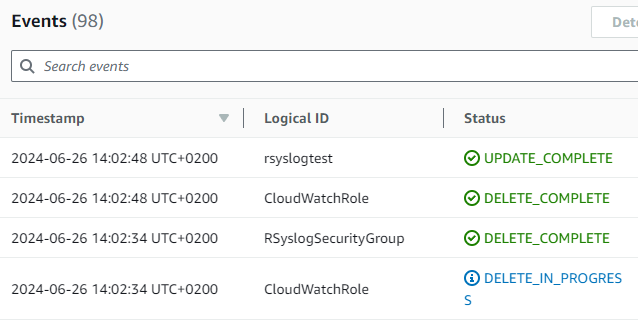

- Monitor Progress: Check the Events tab to monitor the progress of the update. The stack status should show “UPDATE_IN_PROGRESS” while individual resources are being modified.

- Confirm Completion: When the update completes, ensure the status changes to “UPDATE_COMPLETE”.

Once the process is complete, verify that the CloudFormation stack was updated successfully by checking the final status and confirming that all intended changes were applied correctly.

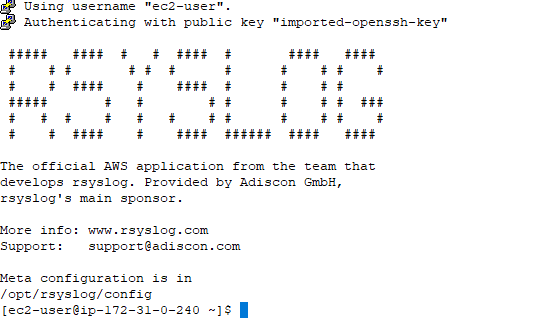
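Monitoring can also be automated. The following Python sketch polls the stack status until the update reaches a final state. The status source is passed in as a function, so the loop can be tried without AWS access; the boto3 helper assumes boto3 and configured credentials, and the function names are illustrative. boto3 also offers a ready-made `stack_update_complete` waiter, which is the simpler choice if you do not need custom handling.

```python
import time

SUCCESS = {"UPDATE_COMPLETE"}
# Final states that mean the update did not go through
FAILURE = {"UPDATE_FAILED", "UPDATE_ROLLBACK_COMPLETE", "UPDATE_ROLLBACK_FAILED"}


def wait_for_update(get_status, interval=15.0, timeout=3600.0, sleep=time.sleep):
    """Poll get_status() until the update succeeds, fails or times out."""
    waited = 0.0
    while True:
        status = get_status()
        if status in SUCCESS:
            return status
        if status in FAILURE:
            raise RuntimeError(f"stack update failed: {status}")
        if waited >= timeout:
            raise TimeoutError(f"still {status} after {timeout} s")
        # e.g. UPDATE_IN_PROGRESS or UPDATE_COMPLETE_CLEANUP_IN_PROGRESS
        sleep(interval)
        waited += interval


def boto3_status_getter(stack_name):
    """Return a function that reads the current StackStatus via boto3."""
    import boto3

    client = boto3.client("cloudformation")

    def get_status():
        return client.describe_stacks(StackName=stack_name)["Stacks"][0]["StackStatus"]

    return get_status
```

Usage: `wait_for_update(boto3_status_getter("rsyslogtest"))` returns "UPDATE_COMPLETE" on success; on failure, the stack events remain the place to look for the cause.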

Confirm EC2 Instance Running rsyslog Server

- Access the EC2 Instance: Use SSH to log in to your EC2 instance running the rsyslog server.

- Verify rsyslog: Once logged in, confirm that the rsyslog server is running properly. You should see the rsyslog welcome message, indicating that the application is installed and operational.

Check the rsyslog meta configuration located in /opt/rsyslog/config to ensure all settings are correct and the service is functioning as expected. This final verification confirms the successful update of your CloudFormation stack and the deployment of the new rsyslog server instance.
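In addition to checking the configuration, you can send a test message and confirm that it arrives. The sketch below uses only Python's standard library (`logging.handlers.SysLogHandler`, which sends over UDP by default). It assumes that the rsyslog server accepts syslog over UDP on port 514 and that the instance's security group allows this traffic from your machine; adjust host and port to your setup. The function name is illustrative, and where the message ends up on the server depends on your rsyslog configuration.

```python
import logging
import logging.handlers


def send_test_message(host, port=514, text="rsyslog stack update test"):
    """Send a single syslog message (facility user, severity info) over UDP."""
    handler = logging.handlers.SysLogHandler(address=(host, port))
    logger = logging.getLogger("rsyslog-update-check")
    logger.propagate = False  # keep the message away from any root handlers
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    try:
        logger.info(text)
    finally:
        logger.removeHandler(handler)
        handler.close()
```

For example, `send_test_message("203.0.113.10")` sends one message to the (example) address 203.0.113.10.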

AWS rsyslog – Message Drop Filter

The rsyslog message drop filter feature allows you to delete unwanted messages by hostname and tag. This filtering capability is always enabled and can be easily configured by updating a simple JSON file.

To modify the drop rules, follow these steps:

- Log in to the instance where the rsyslog application is installed.

- Navigate to the configuration folder located at /opt/rsyslog/config.

- Use your preferred text editor (such as nano) to open the file named “drop_by_host_tag.lt”. Note: You need root permissions to edit this file (for example, use the command “sudo nano drop_by_host_tag.lt”).

- Make the necessary changes to the file based on your filtering requirements.

The sample file looks as follows:

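```json
{ "version" : 1,
  "nomatch" : "0",
  "type" : "string",
  "table" : [
    {"index" : "localhost dropme", "value" : "1" },
    {"index" : "localhost drop-me", "value" : "1" }
  ]
}
```

Each “index” value is a filter: a hostname and a syslog tag separated by one space. “value” is what the lookup returns for a message that matches the entry, and “nomatch” is what it returns for messages that match no entry.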

When configuring filters for the “message drop filter” class in rsyslog, it’s important to understand that each filter consists of two parts: the hostname and the tag.

The first part specifies the hostname to filter for (for example, “localhost”). It’s crucial to note that there should be exactly one space character separating the hostname and the tag. If no space character is given or more than one space is given, the filter will not match any messages.

The second part specifies the message’s syslog tag (for example, “dropme”). It’s essential to keep in mind that neither the hostname nor the tag can contain spaces or any other whitespace characters. This is because such characters are not permitted in hostnames and tags by the relevant RFC, and as such, they will never occur.

If a hostname or tag in a filter contains whitespace, the filter will never match any message and is therefore ineffective.
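These rules can be checked mechanically before a filter goes into the file. The following is a minimal Python sketch of such a check; `split_index` is a hypothetical helper, not part of the product. It accepts exactly two whitespace-free parts separated by exactly one space and rejects everything else.

```python
import re

# Hostname and tag: one or more non-whitespace characters each,
# separated by exactly one space character.
_INDEX = re.compile(r"(\S+) (\S+)")


def split_index(index):
    """Return (hostname, tag) for a valid drop filter index.

    Raises ValueError for anything the filter could never match: a missing
    separator, more than one space, tabs, or leading/trailing whitespace.
    """
    match = _INDEX.fullmatch(index)
    if match is None:
        raise ValueError(f"invalid drop filter index: {index!r}")
    return match.group(1), match.group(2)
```

For example, `split_index("localhost dropme")` returns `("localhost", "dropme")`, while `split_index("localhost  dropme")` (two spaces) raises ValueError.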


When you are done editing, make sure that each entry in the “table” array except the last one ends with a comma. After saving, you can check the overall configuration by running “rsyslogd -N1” on the command line. Note that rsyslogd must be sent a HUP signal to activate the changes.
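Because a misplaced comma is an easy mistake to make, you may prefer to generate the file instead of editing it by hand. The sketch below assumes the file keeps the structure of the sample shown earlier and builds the table with Python's json module, which places all commas correctly. The function names are hypothetical; writing to /opt/rsyslog/config/drop_by_host_tag.lt requires root permissions, as noted above.

```python
import json


def build_drop_table(filters):
    """Build the lookup table document from (hostname, tag) pairs."""
    table = []
    for host, tag in filters:
        # Neither part may be empty or contain whitespace, or it never matches.
        if not host or not tag or any(ch.isspace() for ch in host + tag):
            raise ValueError(f"invalid filter: {host!r} {tag!r}")
        table.append({"index": f"{host} {tag}", "value": "1"})
    return {"version": 1, "nomatch": "0", "type": "string", "table": table}


def write_drop_table(path, filters):
    """Write the table as JSON; json.dump inserts the separating commas."""
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(build_drop_table(filters), fh, indent=2)
        fh.write("\n")
```

For example, `write_drop_table("/opt/rsyslog/config/drop_by_host_tag.lt", [("localhost", "dropme"), ("localhost", "drop-me")])` produces a file with the same entries as the sample. The configuration check and the HUP are still needed afterwards.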

In later stages of the beta build process, we will at least partly automate the post-edit check and activation procedures.
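Until then, the check and the activation step can be scripted. The following Python sketch runs “rsyslogd -N1” and, only if it succeeds, sends a HUP signal to rsyslogd. It assumes that rsyslogd runs directly on the instance (not inside a container), that a non-zero exit status of “rsyslogd -N1” indicates a configuration problem, and that the process ID is stored in /var/run/rsyslogd.pid. The PID file location varies between systems, so verify it first, and run the script with root permissions. The function names are illustrative.

```python
import os
import signal
import subprocess

CHECK_CMD = ["rsyslogd", "-N1"]    # configuration check described above
PID_FILE = "/var/run/rsyslogd.pid"  # assumption: verify the path on your system


def config_ok(cmd=CHECK_CMD):
    """Run the configuration check; True if it exits with status 0."""
    result = subprocess.run(cmd, capture_output=True, text=True)
    if result.returncode != 0:
        print(result.stdout + result.stderr)  # show what the check reported
    return result.returncode == 0


def read_pid(pid_file=PID_FILE):
    """Read the rsyslogd process ID from its PID file."""
    with open(pid_file, encoding="ascii") as fh:
        return int(fh.read().strip())


def apply_changes(cmd=CHECK_CMD, pid_file=PID_FILE):
    """Check the configuration, then send rsyslogd a HUP to activate it."""
    if not config_ok(cmd):
        return False  # leave the running configuration untouched
    os.kill(read_pid(pid_file), signal.SIGHUP)
    return True
```

Checking first means a broken edit is reported before rsyslogd is asked to reload anything.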

How to perform a mass rollout?

A mass rollout, in the scope of this topic, is any case where the product is rolled out to more than 5 to 10 machines and the rollout is to be automated. This case is described first in this article. A special case is when remote offices are to receive exactly the same copies of the product (and configuration settings), but some minimal operator intervention is acceptable. This case is described in the second half of this article.

What all mass rollouts have in common is that the effort required to set up the files for unattended distribution of the configuration file and product executable is less than that of doing the tasks manually. For fewer than 5 systems, it is often more economical to repeat the configuration on each machine, but this depends on the number of rules and their complexity. Note that you can also export and re-import configuration settings, so a hybrid solution (normal interactive setup plus import of pre-created configuration settings) may be best when a smaller number of machines is to be installed.

Automated Rollout

The basic idea behind a mass rollout is to create the intended configuration on a master (or baseline) system. This system holds the complete configuration that is later to be applied to all other systems. Once that system is fully configured, the configuration will be transferred to all others.

The actual transfer is done with simple operating system tools. The complete configuration is stored in the registry, so it can be exported to a file. This is done with the configuration client: in the menu, select “Computer”, then select “Export Settings to Registry File”. A dialog opens where you can specify the file name. Once this is done, the specified file contains an exact snapshot of that machine’s configuration.

This snapshot can then be copied to all other machines and put into their registries with the help of regedit.exe.

An example batch file to install, configure and run the service on “other” servers might be:

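```bat
rem The local directory becomes the program directory
if not exist c:\some-local-dir mkdir c:\some-local-dir
copy \\server\share\rsyslogcl.exe c:\some-local-dir
copy \\server\share\rsyslogcl.pem c:\some-local-dir
rem cd /d also switches drives if the batch file runs from another drive
cd /d c:\some-local-dir
rsyslogcl -i
regedit /s \\server\share\configParams.reg
net start "RSyslog Windows Agent"
```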

The file “configParams.reg” would be the registry file that had been exported with the configuration client.

Of course, the batch file could also run from a CD, which is useful for DMZ systems that might not have Windows networking connectivity to a home server.

Note that the above batch file fully installs the product; there is no need to run the setup program at all. All that needs to be distributed is the service itself, that is, rsyslogcl.exe and its helper DLLs, which form the core service. For a locked-down environment, this also means there is no need to allow incoming connections over Windows RPC or NetBIOS for an engine-only install.

Please note that, in the example above, “c:\some-local-dir” actually is the directory where the product is being installed. The “rsyslogcl -i” does not copy any files – it assumes they are already at their final location. All “rsyslogcl -i” does is to create the necessary entries in the system registry so that the Rsyslog Windows Agent is a registered system service.
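One weakness of a plain batch file is that it continues after a failed step, for example running “net start” even though “rsyslogcl -i” failed. If Python is available on the target machines, the same steps can be written so that they stop at the first error. The following is a sketch under that assumption; the share, directory and .reg file names are placeholders like those in the batch example, and the functions are hypothetical helpers, not part of the product. It must run with administrative rights.

```python
import shutil
import subprocess
from pathlib import Path, PureWindowsPath

# Placeholder locations; adjust to your environment.
SHARE = r"\\server\share"
INSTALL_DIR = r"c:\some-local-dir"
REG_FILE = r"\\server\share\configParams.reg"
SERVICE = "RSyslog Windows Agent"
FILES = ("rsyslogcl.exe", "rsyslogcl.pem")


def install_commands(install_dir=INSTALL_DIR, reg_file=REG_FILE, service=SERVICE):
    """Commands that run once the program files are in place, in order."""
    exe = str(PureWindowsPath(install_dir, "rsyslogcl.exe"))
    return [
        [exe, "-i"],                  # register the service (copies no files)
        ["regedit", "/s", reg_file],  # silently import the exported configuration
        ["net", "start", service],    # start the service
    ]


def engine_only_install(share=SHARE, install_dir=INSTALL_DIR,
                        reg_file=REG_FILE, run=subprocess.run):
    """Copy the files into the program directory, then register and start."""
    target = Path(install_dir)
    target.mkdir(parents=True, exist_ok=True)  # becomes the program directory
    for name in FILES:
        shutil.copy2(Path(share) / name, target / name)
    for cmd in install_commands(install_dir, reg_file):
        run(cmd, check=True, cwd=install_dir)  # raises on the first failing step
```

With `check=True`, `subprocess.run` raises `CalledProcessError` as soon as a command returns a non-zero exit code, so later steps never run against a half-installed service.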

Branch Office Rollout with consistent Configuration

You can also use an engine-only install if you would like to distribute a standardized installation to branch office administrators. Here, the goal is not to have everything done fully automatically, but to ensure that each local administrator can set up a consistent environment with minimal effort.

You can use the following procedure to do this:

- Do a complete install on one machine.

- Configure that installation the way you want it.

- Create a .reg file of this configuration (via the client program).

- Copy rsyslogcl.exe, rsyslogcl.pem and the .reg file that you created to a CD (for example). Take the program files from the install directory of the complete install done in step 1 (there is no specific engine-only download available).

- Distribute the CD.

- Have the users create a directory and copy all the files into it. This directory is where the product is installed; it may be advisable to require a consistent name (from an admin point of view; the product does not require this).

- Have the users run “rsyslogcl -i” from that directory. It will create the necessary registry entries so that the product becomes a registered service.

- Have the users double-click the .reg file to import the pre-configured parameters (created in step 3).

- Either reboot the machine (neither required nor recommended) or start the service (via the Windows “Services” manager or the “net start” command).

Important: The directory created in step 6 is the program directory. Do not delete this directory or the files it contains once you are finished. Doing so would disable the product, as no program files would be left on the system.

If you need to update an engine-only installation, you will probably upgrade only the master installation and then distribute the new exe files and configuration in the same way you distributed the original version. Note that it is not necessary to uninstall the application before an upgrade, at least as long as the local install directory remains the same. It is, however, vital to stop the service first, as otherwise the files cannot be overwritten.
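The upgrade sequence described above (stop the service, overwrite the files, optionally re-import the configuration, start the service again) can be sketched in Python as follows. The share, directory and file names are hypothetical placeholders like those in the batch file above, and the functions are illustrative helpers. “rsyslogcl -i” is not repeated because the install directory stays the same. Note that “net stop” reports an error if the service is not running, which makes run_upgrade stop early in that case.

```python
import subprocess

SERVICE = "RSyslog Windows Agent"


def upgrade_commands(share, install_dir, files=("rsyslogcl.exe", "rsyslogcl.pem"),
                     reg_file=None, service=SERVICE):
    """Ordered commands for upgrading an engine-only install in place."""
    cmds = [["net", "stop", service]]  # files in use cannot be overwritten
    for name in files:
        # copy is a cmd.exe built-in; /y overwrites without prompting
        cmds.append(["cmd", "/c", "copy", "/y", f"{share}\\{name}", install_dir])
    if reg_file:  # only needed if the configuration changed as well
        cmds.append(["regedit", "/s", reg_file])
    cmds.append(["net", "start", service])
    return cmds


def run_upgrade(cmds, run=subprocess.run):
    """Run the commands in order, stopping at the first failure."""
    for cmd in cmds:
        run(cmd, check=True)
```

Usage: `run_upgrade(upgrade_commands(r"\\server\share", r"c:\some-local-dir"))` replaces the program files and restarts the service.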

RSyslog Windows Agent license document – EULA

RSyslog Windows Agent

Version 8.1 Final Release

End User License Agreement

2025-07-14

This binary code license (“License”) contains rights and restrictions associated with use of the accompanying software and documentation (“Software”). Read the License carefully before installing the Software. By installing the Software you agree to the terms and conditions of this License.

1. Limited License Grant.

Adiscon grants you a non-exclusive License to use the software free of charge for 30 days for the purpose of evaluating whether to purchase a commercial license of RSyslog Windows Agent. After this period, you are required to purchase proper licenses if you continue to use it. If Customer has purchased RSyslog Windows Agent licenses, Customer is permitted to use the purchased product edition under the terms of this license agreement.

2. Copyright

The software is confidential copyrighted information of Adiscon GmbH, Germany. You shall not modify, decompile, disassemble, decrypt, extract, or otherwise reverse engineer the software. The software may not be leased, assigned, or sublicensed, in whole or in part. A separate license is required for each computer being monitored by the RSyslog Windows Agent.

3. Trademarks and logos

RSyslog Windows Agent is a trademark of Adiscon. Windows is a registered trademark of Microsoft Corporation. All other trademarks and service marks are the property of their respective owners.

4. Licensed syslog

A RSyslog Windows Agent license covers the installation of one system. RSyslog Windows Agent comes in several editions; only the Enterprise Edition permits the Syslog Listener Service. The RSyslog Windows Agent Enterprise Edition permits you to receive messages from an unlimited number of devices. Please note that instances of Adiscon’s EventReporter and/or RSyslog Windows Agent do NOT count against the remote device count. So you may use a RSyslog Windows Agent Server Professional edition to receive data from 500 servers with Adiscon EventReporter installed on them.

5. Licensed remote file monitor Clients

RSyslog Windows Agent can be used to monitor text files on remote systems. This remote monitoring requires a proper license. RSyslog Windows Agent comes in several editions, each of them permitting remote file monitoring for a different number of remote systems. You must purchase the edition of RSyslog Windows Agent that reflects the number of different remote systems being monitored by RSyslog Windows Agent. The RSyslog Windows Agent Basic Edition does not permit you to monitor files locally or on remote systems. The RSyslog Windows Agent Professional Edition permits you to monitor files locally and on up to 10 remote systems. The RSyslog Windows Agent Enterprise Edition permits you to monitor files on an unlimited number of remote systems.

Please note that only the number of remote systems counts toward the license. So if you monitor 50 files on a single remote system, this counts as a single license. If you monitor a single file on each one of 50 remote systems, then this counts as 50 licenses.

6. Licensed remote event log monitor clients

RSyslog Windows Agent can be used to monitor Windows event logs on remote computers. A full RSyslog Windows Agent license is required for each remote computer on which Windows event logs are being monitored. Technically, the product might count licenses based on the number of remote event log monitors configured. In such cases, a license is required for each remote event log monitor configured.

7. Product Editions

A specific edition of the RSyslog Windows Agent product is licensed. Only the specifically licensed version may be used. For example, if a RSyslog Windows Agent Basic Edition is licensed, features of the Professional edition may not be used. The license keys are also technically different, that is, a Basic edition license key is technically different from a Professional Edition license key. Thus, a Basic edition license key cannot be used to unlock Professional features.

8. Evaluation period

The product comes with a free 30-day evaluation period. We strongly encourage all customers to evaluate the product’s fitness for their systems and environment during the evaluation period. Customer agrees to install the product on production systems only after it has proven to be acceptable on similar test systems.

9. Redistribution

Everybody is granted permission to redistribute the install set if the following criteria are met:

– the install set, product and all documentation (including this license) are supplied unaltered

– there is no registration key distributed along with the install set. REGISTRATION KEYS ARE SOLELY INTENDED FOR THE ORIGINAL CUSTOMER. IT IS COPYRIGHT FRAUD TO DISTRIBUTE REGISTRATION KEYS.

– the redistributor is only allowed to charge a nominal fee if the product is included in a commercial distribution set (e.g. shareware CD collection). For a CD collection, we deem a fee of up to US$ 30 to be reasonable.

– redistribution as part of a book companion CD is OK, as long as the book’s purpose is not only to cover a CD software collection, in which case we deem a cost of US$ 50 for the book to be OK.

10. Disclaimer of warranty

The Software is provided “AS IS,” without a warranty of any kind. ALL EXPRESS OR IMPLIED REPRESENTATIONS AND WARRANTIES, INCLUDING ANY IMPLIED WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE OR NON-INFRINGEMENT, ARE HEREBY EXCLUDED.

11. Limitation of liability

ADISCON SHALL NOT BE LIABLE FOR ANY DAMAGES SUFFERED BY YOU OR ANY THIRD PARTY AS A RESULT OF USING OR DISTRIBUTING SOFTWARE. IN NO EVENT WILL ADISCON BE LIABLE FOR ANY LOST REVENUE, PROFIT OR DATA, OR FOR DIRECT, INDIRECT, SPECIAL, CONSEQUENTIAL, INCIDENTAL OR PUNITIVE DAMAGES, HOWEVER CAUSED AND REGARDLESS OF THE THEORY OF LIABILITY, ARISING OUT OF THE USE OF OR INABILITY TO USE SOFTWARE, EVEN IF ADISCON HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES. IT IS THE CUSTOMER’S RESPONSIBILITY TO USE THE EVALUATION PERIOD TO MAKE SURE THE PRODUCT CAN RUN WITHOUT PROBLEMS IN THE CUSTOMER’S ENVIRONMENT.

12. Severability

The user must assume the entire risk of using the program. IN NO EVENT WILL ADISCON BE LIABLE FOR ANY DAMAGES IN EXCESS OF THE AMOUNT ADISCON RECEIVED FROM YOU FOR A LICENSE TO THE SOFTWARE, EVEN IF ADISCON SHALL HAVE BEEN INFORMED OF THE POSSIBILITY OF SUCH DAMAGES, OR FOR ANY CLAIM BY ANY OTHER PARTY.

13. Termination

The License will terminate automatically if you fail to comply with the limitations described herein. On termination, you must destroy all copies of the Software.

14. Product Parts Not Covered by this License

RSyslog Windows Agent may include optional parts which are not covered by this license. For example, a free web-based interface for log access (phpLogCon) may be part of the RSyslog Windows Agent package. Any package not licensed under the terms of this license will have a prominent note as well as its own license document. If in doubt, the RSyslog Windows Agent service, the RSyslog Windows Agent configuration Program and the Interactive Syslog Server as well as accompanying documentation are licensed under this agreement.

15. High risk activities

The Software is not fault-tolerant and is not designed, manufactured or intended for use or resale as on-line control equipment in hazardous environments requiring fail-safe performance, such as in the operation of nuclear facilities, aircraft navigation or communication systems, air traffic control, direct life support machines, or weapons systems, in which the failure of the Software could lead directly to death, personal injury, or severe physical or environmental damage (“High Risk Activities”). Adiscon specifically disclaims any express or implied warranty of fitness for High Risk Activities.

16. General Provisions

This license agreement shall be governed and interpreted in accordance with the substantive law of Germany applicable to contracts made and performed there. The place of performance of the agreement is Germany, Grossrinderfeld notwithstanding where the Customer is situated or any servers are located.

If any provision of this license agreement shall be held void or unenforceable by a court of competent jurisdiction, it shall be severed from this agreement and shall not affect the remaining provisions. Void clauses are to be construed in such a way that the business purpose of said clauses as envisaged by both parties can be realized in a lawful manner. Except as expressly set forth in this agreement, the exercise by either party of any of its remedies under this agreement will be without prejudice to its other remedies under this agreement or otherwise.